Modular Alpha Deployment

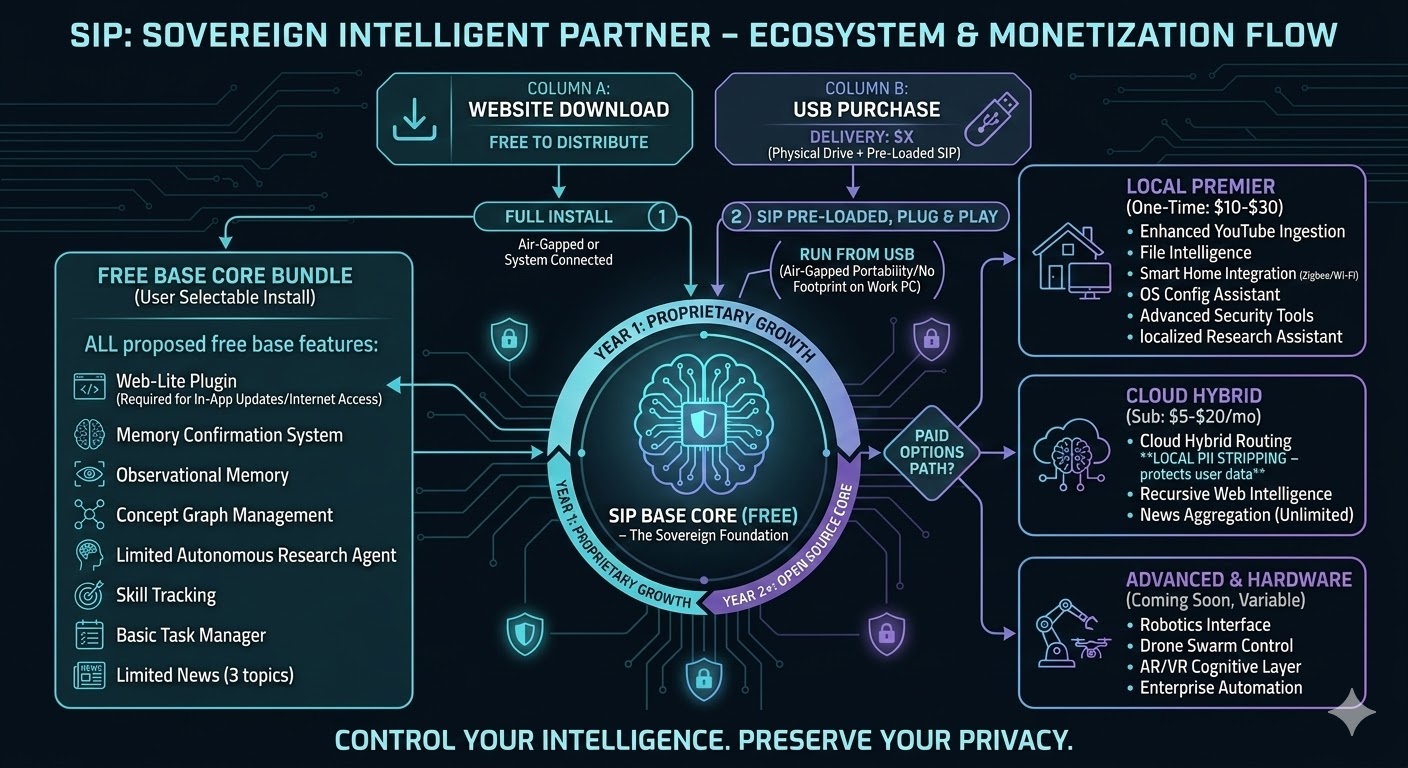

Welcome to the sovereign intelligence ecosystem. SIP is designed as a localized, private cognitive partner. In this Alpha phase, we prioritize making powerful reasoning models accessible on modest hardware.

Alpha Deployment Note: Basic familiarity with navigating the file system and running executable (.exe) files is required.

1. Core SIP System

Source Repository (Git)

Access the raw Python codebase, the schemas for the concept graph, and the logic definitions for the Belief System.

View Repo on GitHub

SIP Core Installer (Alpha v0.8.2)

Windows 10/11 x64 | Size: ~500 MB

Includes pre-configured Python environment and essential dependency loaders.

Requires 8GB RAM minimum. A dedicated GPU is NOT required.
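The requirements above can be checked before downloading. The following is a minimal sketch, not part of the SIP codebase: the function name `meets_core_requirements` is hypothetical, and the RAM figure is passed in by hand because the Python standard library has no portable call for total memory. It checks only the operating system family and the x64 architecture, not the specific Windows 10/11 release.

```python
import platform

MIN_RAM_GB = 8  # minimum RAM stated on this page


def meets_core_requirements(system: str, machine: str, ram_gb: float) -> bool:
    """Return True if the described machine matches the stated installer requirements.

    Hypothetical helper for illustration; it is not shipped with SIP.
    """
    is_windows = system == "Windows"
    # platform.machine() typically reports "AMD64" on 64-bit Windows.
    is_x64 = machine.upper() in {"AMD64", "X86_64"}
    return is_windows and is_x64 and ram_gb >= MIN_RAM_GB


if __name__ == "__main__":
    # ram_gb is an assumed value; replace it with the RAM installed on your system.
    ok = meets_core_requirements(platform.system(), platform.machine(), ram_gb=8)
    print("Meets stated requirements:", ok)
```

No GPU check is needed, since the installer does not require a dedicated GPU.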

Download Core Installer (.exe)

2. Choose Your Local Reasoning Model

These are optimized GGUF reasoning models curated for SIP. SIP requires one of them to perform inference.

SIP-Reasoning 8B Model (Meta Llama3)

Quantization: Q4_K_M | Size: ~4.9 GB

Optimized for accessibility. Successfully deployed on systems with 8GB RAM (no GPU required).

Download 8B GGUF

SIP-Reasoning 14B Model (Mistral/Qwen)

Quantization: Q4_K_M | Size: ~9 GB

Balanced choice for 16GB RAM systems. Provides improved conceptual linking.

Download 14B GGUF

SIP-Reasoning 40B Model (Cohere)

Quantization: Q3_K_M | Size: ~24 GB

High-complexity reasoning. Requires 32GB+ RAM. Expect high latency when running on CPU.

Download 40B GGUF
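
The RAM guidance for the three models can be summarized as a small lookup. This is an illustrative sketch only: `ModelOption` and `pick_model` are hypothetical names, not part of SIP, and the sizes and RAM thresholds are copied from the listings above (with "32GB+" treated as a 32 GB minimum).

```python
from typing import NamedTuple, Optional


class ModelOption(NamedTuple):
    name: str
    quantization: str
    size_gb: float    # approximate download size from this page
    min_ram_gb: int   # RAM guidance from this page


MODELS = [
    ModelOption("SIP-Reasoning 8B", "Q4_K_M", 4.9, 8),
    ModelOption("SIP-Reasoning 14B", "Q4_K_M", 9.0, 16),
    ModelOption("SIP-Reasoning 40B", "Q3_K_M", 24.0, 32),
]


def pick_model(ram_gb: float) -> Optional[ModelOption]:
    """Return the largest listed model whose RAM guidance fits, or None."""
    eligible = [m for m in MODELS if ram_gb >= m.min_ram_gb]
    if not eligible:
        return None
    return max(eligible, key=lambda m: m.min_ram_gb)


if __name__ == "__main__":
    choice = pick_model(16)
    if choice is not None:
        print(f"{choice.name} ({choice.quantization}, ~{choice.size_gb} GB)")
```

Picking the largest model that fits is only one possible policy. On CPU-only systems a smaller model may be preferable for latency even when more RAM is available.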